Blog

2023-09-02

This week, I've been at Talking Maths in Public (TMiP) in Newcastle. TMiP is a conference for anyone involved in (or interested in getting involved in) any sort of maths outreach, enrichment, or public engagement activity. It was really good, and I highly recommend coming to TMiP 2025.

The Saturday morning at TMiP was filled with a choice of activities, including a puzzle hunt written by me: the Tyne trial.

At the start/end point of the Tyne trial, there was a locked box with a combination lock. In order to work out the combination for the lock, you needed to find some clues hidden around Newcastle and solve a few puzzles.

Every team taking part was given a copy of these instructions.

Some people attended TMiP virtually, so I also made a version of the Tyne trial that included links to Google Street View and photos from which the necessary information could be obtained.

You can have a go at this at mscroggs.co.uk/tyne-trial/remote. For anyone who wants to try the puzzles without searching through virtual Newcastle, the numbers that you needed to find are:

- Clue #1: \(a\) is 9.

- Clue #2: \(b\) is 5.

- Clue #3: \(c\) is 1838.

- Clue #4: \(d\) is 1931.

- Clue #5: \(e\) is 1619.

- Clue #6: \(f\) is 48.

- Clue #7: \(g\) is 1000.

The solutions to the puzzles and the final puzzle are below. If you want to try the puzzles for yourself, do that now before reading on.

Puzzle for clue #2: Palindromes

We are going to start with a number then repeat the following process: if the number you have is a palindrome, stop; otherwise, add the number to the same number written backwards.

For example, if we start with 219, then we do: $$219\xrightarrow{+912}1131\xrightarrow{+1311}2442.$$

If you start with the number \(10b+9\) (ie 59), what palindrome do you get?

(If you start with 196, it is unknown whether you will ever get a palindrome.)
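The reverse-and-add process is easy to try by computer. Here is a minimal Python sketch; the function names and the `max_steps` cut-off are my own illustrative choices. The cut-off is needed because, as noted above, nobody knows whether 196 ever reaches a palindrome.

```python
from typing import List, Optional


def is_palindrome(n: int) -> bool:
    s = str(n)
    return s == s[::-1]


def reverse_and_add(start: int, max_steps: int = 1000) -> Optional[List[int]]:
    """Return the numbers visited, ending with a palindrome.

    Returns None if no palindrome appears within max_steps additions.
    """
    sequence = [start]
    while not is_palindrome(sequence[-1]):
        if len(sequence) > max_steps:
            return None  # give up (196 is the famous case where this happens)
        n = sequence[-1]
        sequence.append(n + int(str(n)[::-1]))  # add the number written backwards
    return sequence
```

For example, `reverse_and_add(219)` returns `[219, 1131, 2442]`, matching the worked example; calling it with \(10b+9\) answers the puzzle.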

Puzzle for clue #3: Mostly ones

There are 12 three-digit numbers whose digits are 1, 2, 3, 4, or 5 with exactly two digits that are ones.

How many \(c\)-digit (ie 1838-digit) numbers are there whose digits are 1, 2, 3, 4, or 5 with exactly \(c-1\) digits (ie 1837) that are ones?
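The count follows a simple pattern. There are \(n\) choices for which position holds the digit that isn't a one, and 4 choices (2, 3, 4 or 5) for that digit, giving \(4n\). The Python sketch below (function names are my own) compares this formula with an exhaustive search for short numbers.

```python
from itertools import product


def count_brute_force(n: int) -> int:
    """Count n-digit strings over the digits 1-5 with exactly n - 1 ones."""
    return sum(1 for digits in product("12345", repeat=n) if digits.count("1") == n - 1)


def count_formula(n: int) -> int:
    # n choices for where the non-one digit goes, 4 choices (2-5) for its value
    return 4 * n
```

`count_brute_force(3)` gives the 12 from the example. `count_formula` handles \(c=1838\) without listing \(5^{1838}\) strings.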

Puzzle for clue #4: Is it an integer?

The largest value of \(n\) such that \((n!-2)/(n-2)\) is an integer is 4. What is the largest value of \(n\) such that \((n!-d)/(n-d)\) (ie \((n!-1931)/(n-1931)\)) is an integer?
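Before trying to prove anything, you can look for a pattern with a brute-force search. Python's integers are exact, so huge factorials cause no rounding problems. This sketch is my own illustration. `largest_n` only searches up to a chosen `limit`, so its result is only the largest value up to that limit.

```python
from math import factorial
from typing import Optional


def largest_n(d: int, limit: int) -> Optional[int]:
    """Largest n <= limit for which (n! - d) / (n - d) is an integer."""
    best = None
    for n in range(limit + 1):
        if n == d:
            continue  # the denominator would be zero
        if (factorial(n) - d) % (n - d) == 0:  # exact integer divisibility test
            best = n
    return best
```

`largest_n(2, 50)` returns 4, agreeing with the example above. Running it for a few other small values of \(d\) suggests a pattern worth trying to prove.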

Puzzle for clue #5: How many steps?

We are going to start with a number then repeat the following process: if we've reached 0, stop; otherwise, subtract the smallest prime factor of the current number.

For example, if we start with 9, then we do: $$9\xrightarrow{-3}6\xrightarrow{-2}4\xrightarrow{-2}2\xrightarrow{-2}0.$$ It took 4 steps to get to 0.

What is the smallest starting number such that this process will take \(e\) (ie 1619) steps?
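Here is a short Python sketch of the process; the helper names are my own. Each step from \(n\) lands on a smaller number, so `smallest_start` remembers the step count for every smaller number instead of re-running the process from scratch for each candidate.

```python
from typing import List


def smallest_prime_factor(n: int) -> int:
    """Smallest prime factor of n >= 2, by trial division."""
    if n % 2 == 0:
        return 2
    p = 3
    while p * p <= n:
        if n % p == 0:
            return p
        p += 2
    return n  # no smaller factor found, so n is prime


def path(start: int) -> List[int]:
    """The numbers visited when starting from start >= 2."""
    visited = [start]
    while visited[-1] != 0:
        visited.append(visited[-1] - smallest_prime_factor(visited[-1]))
    return visited


def smallest_start(target_steps: int) -> int:
    """Smallest starting number (>= 2) whose process takes target_steps steps."""
    steps = {0: 0}
    n = 1
    while True:
        n += 1
        # one step takes n to n - p, whose step count is already known
        steps[n] = 1 + steps[n - smallest_prime_factor(n)]
        if steps[n] == target_steps:
            return n
```

`path(9)` returns `[9, 6, 4, 2, 0]`, the four steps in the example above.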

Puzzle for clue #6: Four-digit number

I thought of a four-digit number. I removed a digit to make a three-digit number, then added my two numbers together.

The result is \(200f+127\) (ie 9727). What was my original number?
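There are only 9000 four-digit numbers, so an exhaustive search is quick. In this Python sketch (the function name is my own), I've assumed the three-digit number isn't allowed to start with 0.

```python
from typing import List


def originals(total: int) -> List[int]:
    """Four-digit numbers n such that n plus n-with-one-digit-removed equals total."""
    found = set()
    for n in range(1000, 10000):
        digits = str(n)
        for i in range(4):
            shorter = digits[:i] + digits[i + 1:]  # remove the digit at position i
            if shorter[0] == "0":
                continue  # assumption: a three-digit number can't start with 0
            if n + int(shorter) == total:
                found.add(n)
    return sorted(found)
```

Calling `originals(200 * f + 127)` lists every possible original number.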

Puzzle for clue #7: Dice

If you roll two fair six-sided dice, the most likely total is 7. What is the most likely total if you roll \(1470+g\) (ie 2470) dice?
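Exact counting is a good way to spot the pattern for small numbers of dice. This Python sketch (the function name is my own) adds one die at a time, tracking how many ways each total can be made. It returns every total that ties for most likely. It works for any number of dice in principle, but pure Python gets slow for 2470 dice, so it's better used to test a guess.

```python
from typing import Dict, List


def most_likely_totals(num_dice: int, sides: int = 6) -> List[int]:
    counts: Dict[int, int] = {0: 1}  # ways to make each total with the dice so far
    for _ in range(num_dice):
        new_counts: Dict[int, int] = {}
        for total, ways in counts.items():
            for face in range(1, sides + 1):
                new_counts[total + face] = new_counts.get(total + face, 0) + ways
        counts = new_counts
    best = max(counts.values())
    return sorted(total for total, ways in counts.items() if ways == best)
```

`most_likely_totals(2)` returns `[7]`, as above. `most_likely_totals(3)` returns `[10, 11]`: with three dice there's a tie.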

The final puzzle

The final puzzle involves using the answers to the six puzzles to find the four-digit code that opens the box (and opened the physical locked box that was in the library on Saturday). To give hints to this code, each clue was given a "score".

The score of a number is the number of values of \(i\) such that the \(i\)th digit of the code is a factor of the \(i\)th digit of the number.

For example, if the code was 1234, then the score of the number 3654 would be 3 (because 1 is a factor of 3; 2 is a factor of 6; and 4 is a factor of 4).

The seven clues to the final code are:

- Clue #1: 6561 scores 2 points.

- Clue #2: 1111 scores 0 points.

- Clue #3: 7352 scores 1 point.

- Clue #4: 3562 scores 1 point.

- Clue #5: 3238 scores 1 point.

- Clue #6: 8843 scores 1 point.

- Clue #7: 8645 scores 3 points.
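If you'd rather let a computer do the final step, a brute-force search over all 10,000 possible codes is quick. In this Python sketch (names are my own), a code digit of 0 is assumed never to score, since 0 isn't a factor of any nonzero digit. The clue numbers are copied from the list above.

```python
from typing import Dict, List

CLUES: Dict[str, int] = {
    "6561": 2, "1111": 0, "7352": 1, "3562": 1, "3238": 1, "8843": 1, "8645": 3,
}


def score(code: str, number: str) -> int:
    """Count positions where the code's digit is a factor of the number's digit."""
    return sum(
        1
        for c, x in zip(code, number)
        if int(c) != 0 and int(x) % int(c) == 0  # assumption: 0 never counts
    )


def consistent_codes(clues: Dict[str, int]) -> List[str]:
    """Every four-digit code (leading zeros allowed) that fits all the clues."""
    return [
        code
        for code in (f"{i:04d}" for i in range(10000))
        if all(score(code, number) == points for number, points in clues.items())
    ]
```

`score("1234", "3654")` returns 3, matching the worked example. `consistent_codes(CLUES)` lists every code that fits all seven clues.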


Image: Chalkdust Magazine

Chalkdust issue 17

2023-05-22

For the past couple of months, I've once again been spending an awful lot of my spare time working on Chalkdust. Today you can see the result of all this hard work: Chalkdust issue 17.

I recommend checking out the entire magazine: you can read it online or order a physical copy.

My most popular contribution to the magazine is probably the crossnumber. I enjoyed writing this one; I hope you enjoy solving it.

I also spent some time making this for the back page of the magazine. It's probably the most fun I've had making something stupid for Chalkdust for ages.

Chalkdust Magazine


2023-04-12

If, like me, you grew up in the 90s, then one of your earliest experiences of programming was probably using Logo. In Logo, you use various commands to move a "turtle" around the screen. As the turtle moves, it can draw lines. I have a very strong memory from early secondary school of spending a few maths lessons in the computer room writing Logo programs to draw isometric houses.

If you read this blog regularly, you'll probably have noticed that I'm a fan of the game Asteroids. Over the last few weeks, I've been working on Logo Asteroids, a version of Asteroids where you control your spaceship using Logo commands. You can play this game at mscroggs.co.uk/logo.

If you've not used Logo before, or if it's been so long since you have that you've forgotten the commands, this blog post will guide you through how to get started. If you're confident in your Logo skills, you might still want to scroll to the end of this post, where I share some custom Logo commands that you might find helpful.

The game's source code is available on GitHub; you can also use the GitHub issue tracker to report bugs and make feature requests.

Moving the turtle

If you're starting out in Logo, the first thing you'll want to do is move the turtle.

You can move it forwards or backwards using the commands fd or bk followed by a number of pixels:

Logo

fd 100

bk 75

To change the direction in which the turtle is facing, you can use the command rt (right) or lt (left) followed by an angle in degrees:

Logo

rt 90

lt 45

You can also chain together multiple commands on a single line like this:

Logo

fd 100 rt 90 fd 100 lt 90

By default, a line is drawn whenever the turtle moves forwards or backwards. You can stop lines from being drawn by running the command pu (pen up). To start drawing again, run pd (pen down). If you want to make the game needlessly harder for yourself, you can use the command ht (hide turtle). To make the game easier again, run st (show turtle).

In a normal Logo program, the lines that you draw will stay there until you clear the screen (cs). In Logo Asteroids, the lines will only be visible for a limited amount of time, and the asteroids will bounce off them while they are visible. To prevent too many lines from being drawn too quickly, the turtle can move a maximum of 1000 pixels in a single command (or chain of commands).

Special commands for Logo Asteroids

As well as the Logo commands, there are some commands I have included that are specific to Logo Asteroids. These are:

Logo

start

fire

help

The command start will start the game. You'll need to run this before you can run any other commands. You'll also need to run it to start the game again if you run out of lives.

The command fire will fire at the asteroids. This command can be run a maximum of 10 times in a single chain of commands.

The command help will show details of all the available commands below the game.

Defining your own subroutines

If you write some Logo commands that you want to use lots of times, you can use the to command to define a subroutine.

For example, the following code defines a command called square that will draw a square of side length 50.

Logo

to square fd 50 rt 90 fd 50 rt 90 fd 50 rt 90 fd 50 rt 90 end

The subroutine can then be used by running:

Logo

square

The square subroutine can be simplified by using the repeat command:

Logo

to square repeat 4 [fd 50 rt 90] end

... or it could be made to take a side length as input:

Logo

to square :side repeat 4 [fd :side rt 90] end

This updated version of the square subroutine can then be used by running:

Logo

square 50

square 100

square 125

Helpful subroutines for Logo Asteroids

To help you get going with Logo Asteroids, I've written a few subroutines that you might find helpful. See if you can work out what they do before running them.

Logo

to burst repeat 10 [fire rt 36] end

to protect pu fd 30 pd rt 135 repeat 4 [fd 30 * sqrt 2 rt 90] lt 135 pu bk 30 pd end

to multifire rt 10 repeat 10 [fire lt 2] rt 10 end

If you write your own helpful subroutine, share it in the comments below. You can put <logo> and </logo> HTML tags around your subroutine to make it display more nicely in your comment.

To end this blog post, here's one final subroutine for you to try out:

Logo

to house pu setxy 400 225 seth 0 pd lt 30 fd 40 lt 60 fd 40 lt 120 fd 40 lt 60 fd 40 rt 120 fd 50 rt 60 fd 40 rt 120 fd 50 setxy 400 + 20 * cos 30 155 rt 180 fd 50 setxy 400 - 50 * cos 30 160 pu setxy 400 + 20 * cos 30 155 pd setxy 400 + 40 * cos 30 165 pu setxy 400 225 lt 60 pd fd 50 rt 60 fd 50 rt 120 fd 50 pu rt 60 fd 20 pd lt 120 fd 20 rt 120 fd 10 rt 60 fd 20 rt 60 fd 50 pu rt 60 fd 10 pd rt 120 fd 50 ht end


Comments

Comments in green were written by me. Comments in blue were not written by me.

Very cool! Here's a combination of your "burst" and "protect" subroutines:

to f pu sety 195 setx 390 pd repeat 10 [fire fd 20 rt 36] pu home end

Aaron

I didn't include this one in the blog post, but here's a bonus fun command:

to randwalk repeat 100 [rt random 360 fd 10] end

Matthew


2023-03-14

A circle of radius \(r\) on a piece of paper can be thought of as a line through all the points on the paper that are a distance \(r\) from the centre of the circle. The length of this line (ie the circumference) is \(2\pi r\).

Due to the curvature of the Earth, the line through all the points that are a distance \(r\) from where you are currently standing will have a length less than \(2\pi r\) (although you might not notice the difference unless \(r\) is very big). The type of curvature that a sphere has is often called positive curvature.

It is also possible to create surfaces that have negative curvature: surfaces where the length of the line through all the points that are a distance \(r\) from a point is more than \(2\pi r\). These surfaces are called hyperbolic surfaces.
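Standard formulas make this precise. On a sphere of radius \(R\), the line through all points at a distance \(r\) (measured along the surface) has length \(2\pi R\sin(r/R)\). On a hyperbolic surface of constant curvature \(-1/R^2\), it has length \(2\pi R\sinh(r/R)\). Expanding both as series shows how small the difference from \(2\pi r\) is when \(r\) is much smaller than \(R\):

$$2\pi R\sin\frac{r}{R} = 2\pi r - \frac{\pi r^3}{3R^2} + \cdots \qquad\qquad 2\pi R\sinh\frac{r}{R} = 2\pi r + \frac{\pi r^3}{3R^2} + \cdots$$

Treating the Earth as a sphere with \(R\approx 6371\) km, a circle of radius \(r=100\) km comes out only about 26 m shorter than \(2\pi r\approx 628\) km.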

Hyperbolic surfaces

The most readily available example of a hyperbolic surface is a Pringle: Pringles are curved upwards in one direction, and curved downwards in the other direction.

It's not immediately obvious what a larger hyperbolic surface would look like, but you can easily make one if you know how to crochet: simply increase the number of stitches at a constant rate.

If it were possible to continue crocheting forever, you'd get an unbounded hyperbolic surface: the hyperbolic plane.

Over the last few weeks, I've been working on adding hyperbolic levels to Mathsteroids, the asteroids-inspired game that I started making levels for in 2018.

There are quite a few different ways to represent the hyperbolic plane in 2D. In this blog post we'll take a look at some of these; I encourage you to play the Mathsteroids level for each one as you read so that you can get a feeling for their behaviour.

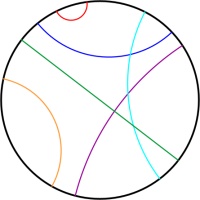

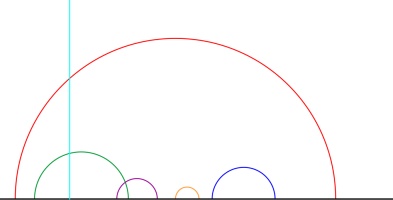

Poincaré disk

The Poincaré disk represents the hyperbolic plane as the interior of a circle. As objects move away from the centre of the circle, they appear smaller and smaller; and the circumference of the circle is infinitely far from the centre of the circle. Straight lines on the hyperbolic plane appear on the Poincaré disk as either arcs of circles that meet the edge of the disk at right angles, or straight lines through the centre of the circle.
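You can see why the edge is infinitely far away by using the standard distance formula for the Poincaré disk (curvature \(-1\), points written as complex numbers): \(\cosh d = 1 + \frac{2|u-v|^2}{(1-|u|^2)(1-|v|^2)}\). Here it is as a small Python sketch; the function name is my own.

```python
import math


def poincare_distance(u: complex, v: complex) -> float:
    """Hyperbolic distance between two points inside the unit disk."""
    numerator = 2 * abs(u - v) ** 2
    denominator = (1 - abs(u) ** 2) * (1 - abs(v) ** 2)
    return math.acosh(1 + numerator / denominator)
```

For \(r = 0.5, 0.9, 0.99, 0.999\), `poincare_distance(0, r)` gives roughly 1.10, 2.94, 5.29 and 7.60. Each step towards the edge adds more distance, with no upper limit.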

One of the nicest properties of the Poincaré disk is that it correctly represents angles: if you measure the angle between two intersecting straight lines represented on the disk, then the value you measure will be the same as the angle between the two lines in the actual hyperbolic plane.

This representation can be used to demonstrate some interesting properties of the hyperbolic plane.

In normal two-dimensional space, if you are given a line and a point not on that line, then there is exactly one line that goes through the point and is parallel to the first line. But in hyperbolic space, there are infinitely many such parallel lines.

In Mathsteroids, there are two levels based on the Poincaré disk. The first level is bounded: you can only fly so far from the centre of the circle before you hit the edge of the level and bounce back. (This prevents you from getting lost in the part of the disk where you would appear very very small.)

The second level is unbounded. In this level, you can fly as far as you like in any direction, but the point which is at the centre of the disk will change when you go too far to prevent the ship from getting too small.

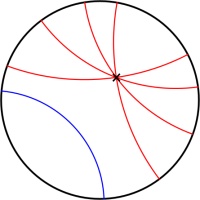

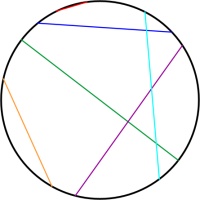

Beltrami–Klein disk

Like the Poincaré disk, the Beltrami–Klein disk represents the hyperbolic plane using the interior of a circle, with the edge of the circle infinitely far away. Straight lines in hyperbolic space will appear as straight lines in the Beltrami–Klein disk. The Beltrami–Klein disk is closely related to the Poincaré disk: the same line represented on both disks will have its endpoints at the same points on the circle (although these endpoints are arguably not part of the line as they are on the circle that is infinitely far away).

Unlike in the Poincaré disk, angles are not correctly represented in the Beltrami–Klein disk. It can, however, be a very useful representation, as straight lines appearing as straight lines is a helpful feature.
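A standard change of coordinates links the two disks: a point \(p\) on the Poincaré disk corresponds to \(2p/(1+|p|^2)\) on the Beltrami–Klein disk. Points on the boundary circle, where \(|p|=1\), stay where they are, which is why a line has the same endpoints on both disks. Here it is as a Python sketch; the function names and the example line are my own.

```python
import math


def poincare_to_klein(p: complex) -> complex:
    """Move a point from the Poincare disk to the Beltrami-Klein disk."""
    return 2 * p / (1 + abs(p) ** 2)


def klein_to_poincare(k: complex) -> complex:
    """Inverse of poincare_to_klein."""
    return k / (1 + math.sqrt(1 - abs(k) ** 2))


def example_line_point(theta: float) -> complex:
    """A point on the circle centred at 2 with radius sqrt(3).

    This circle meets the unit circle at right angles, so its arc inside the
    disk (theta near pi) is a straight line in the Poincare disk.
    """
    return 2 + math.sqrt(3) * complex(math.cos(theta), math.sin(theta))
```

Every point that `example_line_point` gives inside the disk maps to a Klein point with real part exactly \(1/2\). A curved Poincaré line has become a straight chord.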

Again, there are two Mathsteroids levels based on the Beltrami–Klein disk: a bounded level and an unbounded level.

Poincaré half-plane

The Poincaré half-plane represents the hyperbolic plane using the half-plane above the \(x\)-axis. Straight lines in hyperbolic space are represented by either vertical straight lines or semicircles with their endpoints on the \(x\)-axis.

Like the Poincaré disk, the Poincaré half-plane correctly represents the angles between lines.
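The map \(z\mapsto(z-i)/(z+i)\), a Cayley transform, links the half-plane to the disk. It sends the upper half-plane onto the interior of the unit disk and the \(x\)-axis onto the boundary circle. The Python sketch below (function names are my own) uses the standard half-plane distance formula, which agrees with the disk formula after mapping.

```python
import math


def half_plane_to_disk(z: complex) -> complex:
    """Cayley transform: upper half-plane -> unit disk, sending i to 0."""
    return (z - 1j) / (z + 1j)


def half_plane_distance(z: complex, w: complex) -> float:
    """Hyperbolic distance between two points with positive imaginary part."""
    return math.acosh(1 + abs(z - w) ** 2 / (2 * z.imag * w.imag))


def disk_distance(u: complex, v: complex) -> float:
    """Hyperbolic distance in the Poincare disk, for comparison."""
    return math.acosh(1 + 2 * abs(u - v) ** 2 / ((1 - abs(u) ** 2) * (1 - abs(v) ** 2)))
```

For example, `half_plane_distance(1j, 2j)` is \(\ln 2\approx 0.693\). This matches `disk_distance(0, 1/3)`, since \(i\) and \(2i\) map to 0 and \(1/3\).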

There is one Mathsteroids level based on the Poincaré half-plane: this level is bounded so you can only travel so far away from where you start.

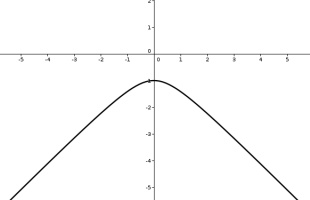

Band

The band representation represents the hyperbolic plane as the strip between two horizontal lines. The strip extends forever to the left and right.

Straight lines on the band come in a variety of shapes.

There is one Mathsteroids level based on the band representation: the bounded level.

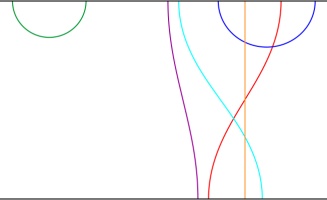

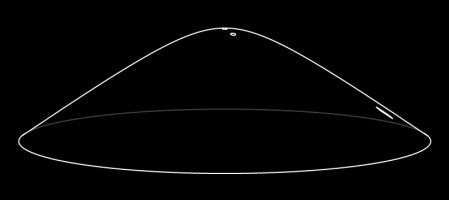

Hyperboloid and Gans plane

The hyperbolic plane can be represented on the surface of a hyperboloid. A hyperboloid is the shape you get if you rotate a hyperbola (a curve shaped like \(y=1/x\)).

Straight lines on this representation are the intersections of the hyperboloid with flat planes that go through the origin.

You can see what these straight lines look like by playing the (bounded) Mathsteroids level based on the hyperboloid.

The Gans plane is simply the hyperboloid viewed from above. There is a (bounded) Mathsteroids level based on the Gans plane.
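A standard way to move between these representations is to send a point \(p\) of the Poincaré disk to the upper sheet of \(z^2-x^2-y^2=1\). This is the hyperboloid you get by rotating the hyperbola \(z^2-x^2=1\) about the \(z\)-axis. The Python sketch below (function names are my own) does this, then drops the height to get the Gans plane.

```python
import math
from typing import Tuple


def disk_to_hyperboloid(p: complex) -> Tuple[float, float, float]:
    """Point on the sheet z > 0 of z^2 - x^2 - y^2 = 1 matching p in the Poincare disk."""
    s = abs(p) ** 2
    return (2 * p.real / (1 - s), 2 * p.imag / (1 - s), (1 + s) / (1 - s))


def hyperboloid_to_gans(point: Tuple[float, float, float]) -> Tuple[float, float]:
    """The Gans plane: view the hyperboloid from above, ie forget the height z."""
    x, y, _ = point
    return (x, y)
```

This also shows the description of straight lines in action. The Poincaré straight line given by the circle centred at 2 with radius \(\sqrt3\) lands in the plane \(z=2x\), which passes through the origin.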


2023-02-03

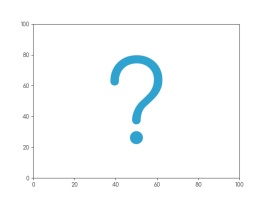

Imagine a set of 142 points on a two-dimensional graph.

The mean of the \(x\)-values of the points is 54.26.

The mean of the \(y\)-values of the points is 47.83.

The standard deviation of the \(x\)-values is 16.76.

The standard deviation of the \(y\)-values is 26.93.

What are you imagining that the data looks like?

Whatever you're thinking of, it's probably not this:

This is the datasaurus, a dataset that was created by Alberto Cairo in 2016 to remind people to look beyond the summary statistics when analysing a dataset.

Anscombe's quartet

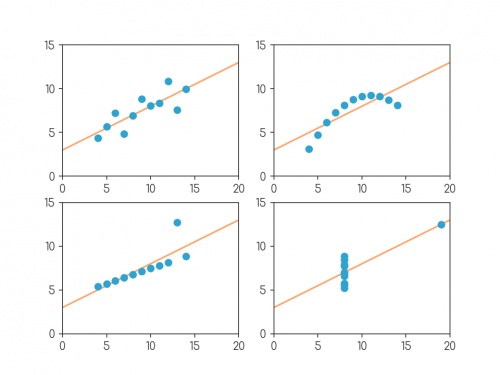

In 1973, four datasets with a similar aim were published. Graphs in statistical analysis by Francis J Anscombe [1] contained four datasets that have become known as Anscombe's quartet: they all have the same mean \(x\)-value, mean \(y\)-value, standard deviation of \(x\)-values, standard deviation of \(y\)-values, and linear regression line, as well as several other values related to correlation and variance. But if you plot them, the four datasets look very different:

Plots of the four datasets that make up Anscombe's quartet. For each set of data: the mean of the \(x\)-values is 9; the mean of the \(y\)-values is 7.5; the standard deviation of the \(x\)-values is 3.32; the standard deviation of the \(y\)-values is 2.03; the correlation coefficient between \(x\) and \(y\) is 0.816; the linear regression line is \(y=3+0.5x\); and the coefficient of determination of the linear regression is 0.667.
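These summary statistics are easy to recompute. The Python sketch below uses the first of Anscombe's four datasets, with values as they are commonly reproduced (it's worth checking them against the paper [1]). The standard deviations use the sample (\(n-1\)) convention, which is what gives 3.32 here.

```python
import math
from typing import Dict, List

# Anscombe's first dataset, as commonly reproduced
X = [10, 8, 13, 9, 11, 14, 6, 4, 12, 7, 5]
Y = [8.04, 6.95, 7.58, 8.81, 8.33, 9.96, 7.24, 4.26, 10.84, 4.82, 5.68]


def summary(xs: List[float], ys: List[float]) -> Dict[str, float]:
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx  # least-squares regression line
    r = sxy / math.sqrt(sxx * syy)  # correlation coefficient
    return {
        "mean_x": mean_x,
        "mean_y": mean_y,
        "sd_x": math.sqrt(sxx / (n - 1)),  # sample standard deviation
        "sd_y": math.sqrt(syy / (n - 1)),
        "r": r,
        "slope": slope,
        "intercept": mean_y - slope * mean_x,
        "r_squared": r * r,
    }
```

Rounded, `summary(X, Y)` reproduces every number in the caption.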

Anscombe noted that there were prevalent attitudes that:

- "Numerical calculations are exact, but graphs are rough."

- "For any particular kind of statistical data, there is just one set of calculations constituting a correct statistical analysis."

- "Performing intricate calculations is virtuous, actually looking at the data is cheating."

The four datasets were designed to counter these by showing that data exhibiting the same statistics can actually be very very different.

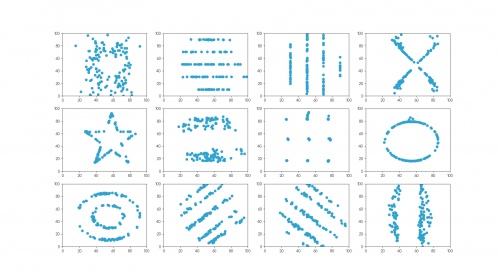

The datasaurus dozen

Anscombe's datasets make their point well, but the arrangement of the points is very regular and looks a little artificial when compared with real datasets.

In 2017, twelve sets of more realistic-looking data were published (in Same stats, different graphs: generating datasets with varied appearance and identical statistics through simulated annealing by Justin Matejka and George Fitzmaurice [2]).

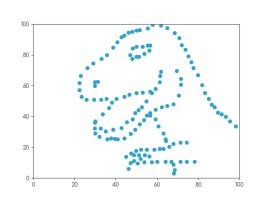

These datasets, known as the datasaurus dozen, all have the same mean \(x\)-value, mean \(y\)-value, standard deviation of \(x\)-values, standard deviation of \(y\)-values, and correlation coefficient (to two decimal places) as the datasaurus.

The twelve datasets that make up the datasaurus dozen. For each set of data (to two decimal places): the mean of the \(x\)-values is 54.26; the mean of the \(y\)-values is 47.83; the standard deviation of the \(x\)-values is 16.76; the standard deviation of the \(y\)-values is 26.93; the correlation coefficient between \(x\) and \(y\) is -0.06.

Creating datasets like this is not trivial: if you have a set of values for the statistical properties of a dataset, it is difficult to create a dataset with those properties, especially one that looks like a certain shape or pattern.

But if you already have one dataset with the desired properties, you can make other datasets with the same properties by very slightly moving every point in a random direction, then checking that the properties are the same. If you do this a few times, you'll eventually get a second dataset with the right properties.

The datasets in the datasaurus dozen were generated using this method: repeatedly adjusting all the points ever so slightly, checking if the properties were the same, then keeping the updated data if it was closer to a target shape.
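Here is a minimal Python sketch of that loop. It is not the code used for the datasaurus dozen; everything in it is my own illustrative choice. The target shape is a circle and the step size is arbitrary. The sketch also greedily keeps only improvements, whereas the paper's title says it uses simulated annealing, which sometimes accepts a worse move to avoid getting stuck.

```python
import math
import random
from typing import List, Tuple

Point = Tuple[float, float]


def rounded_stats(points: List[Point]) -> Tuple[float, ...]:
    """Means, population standard deviations and correlation, to 2 dp."""
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    sx = math.sqrt(sum((x - mx) ** 2 for x, _ in points) / n)
    sy = math.sqrt(sum((y - my) ** 2 for _, y in points) / n)
    r = sum((x - mx) * (y - my) for x, y in points) / (n * sx * sy)
    return tuple(round(v, 2) for v in (mx, my, sx, sy, r))


def distance_to_circle(points: List[Point], centre: Point = (0.5, 0.5), radius: float = 0.3) -> float:
    """Mean distance from the points to the target shape (here, a circle)."""
    cx, cy = centre
    return sum(abs(math.hypot(x - cx, y - cy) - radius) for x, y in points) / len(points)


def morph(points: List[Point], iterations: int = 2000, step: float = 0.002, seed: int = 0) -> List[Point]:
    rng = random.Random(seed)
    target_stats = rounded_stats(points)
    current, current_distance = list(points), distance_to_circle(points)
    for _ in range(iterations):
        # move every point very slightly in a random direction
        candidate = [(x + rng.gauss(0, step), y + rng.gauss(0, step)) for x, y in current]
        if rounded_stats(candidate) != target_stats:
            continue  # the statistics changed, so reject this move
        candidate_distance = distance_to_circle(candidate)
        if candidate_distance < current_distance:  # keep it only if closer to the target
            current, current_distance = candidate, candidate_distance
    return current
```

By construction, every dataset `morph` returns has the same rounded statistics as the one it started from.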

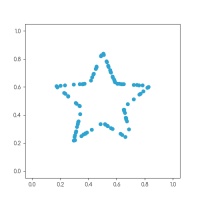

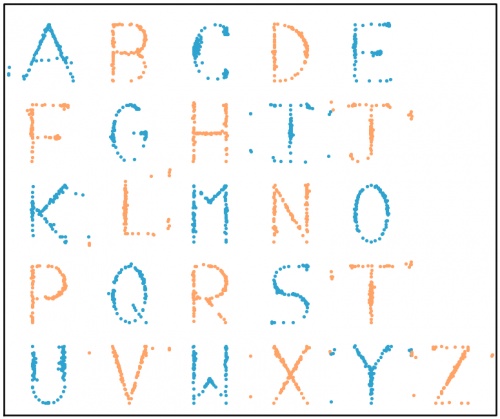

The databet

Using the same method, I generated the databet: a collection of datasets that look like the letters of the alphabet. I started with this set of 100 points resembling a star:

After a long time repeatedly moving points by a very small amount, my computer eventually generated these 26 datasets, all of which have the same means, standard deviations, and correlation coefficient:

The databet. For each set of data (to two decimal places): the mean of the \(x\)-values is 0.50; the mean of the \(y\)-values is 0.52; the standard deviation of the \(x\)-values is 0.17; the standard deviation of the \(y\)-values is 0.18; the correlation coefficient between \(x\) and \(y\) is 0.16.

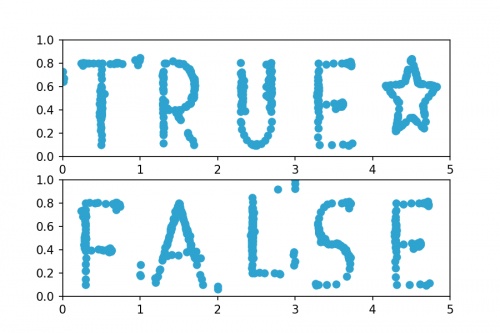

Words

Now that we have the alphabet, we can write words using the databet. You can enter a word or phrase here to do this:

Given two datasets with the same number of points, we can make a new, larger dataset by including all the points from both of the smaller sets. It is possible to write the mean and standard deviation of the larger dataset in terms of the means and standard deviations of the smaller sets: in each case, the statistic of the larger set depends only on the statistics of the smaller sets and not on the actual data.

Applying this to the databet, we see that the datasets that spell words of a fixed length will all have the same mean and standard deviation. (The same is not true, sadly, for the correlation coefficient.) For example, the datasets shown in the following plot both have the same means and standard deviations:

Datasets that spell "TRUE☆" and "FALSE". For both sets of data (to two decimal places): the mean of the \(x\)-values is 2.50; the mean of the \(y\)-values is 0.52; the standard deviation of the \(x\)-values is 1.42; the standard deviation of the \(y\)-values is 0.18.
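The combination rule is short enough to write down. Take sets of equal size and use the population standard deviation (dividing by the number of points). Then the combined mean is the average of the means, and the combined variance is the average of the variances plus the variance of the means. Here it is as a Python sketch; the function name is my own. The check in the usage note rests on an assumption of mine, suggested by the \(x\)-mean of 2.50: each letter in a five-letter word sits one unit to the right of the previous one.

```python
import math
from statistics import mean, pstdev
from typing import List, Tuple


def combine(stats: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Mean and population sd of the union of equal-sized datasets, from (mean, sd) pairs."""
    means = [m for m, _ in stats]
    sds = [s for _, s in stats]
    combined_mean = mean(means)
    # average of the variances, plus the spread of the means themselves
    combined_variance = mean([s * s for s in sds]) + pstdev(means) ** 2
    return combined_mean, math.sqrt(combined_variance)
```

Under that assumption, `combine([(0.50 + k, 0.17) for k in range(5)])` gives a mean of 2.50 and a standard deviation of about 1.42, matching the caption.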

Hopefully by now you agree with me that Anscombe was right: it's very important to plot data as well as looking at the summary statistics.

If you want to play with the databet yourself, all the letters are available on GitHub in JSON format.

The GitHub repo also includes fonts that you can download and install so you can use Databet Sans in your next important document.

References

[1] Graphs in statistical analysis by Francis J Anscombe. American Statistician, 1973.

[2] Same stats, different graphs: generating datasets with varied appearance and identical statistics through simulated annealing by Justin Matejka and George Fitzmaurice. Proceedings of the 2017 CHI Conference on Human Factors in Computing Systems, 2017.
